TL;DR: Just ask the LLM “from where to where,” and let the server retrieve the text directly. Measured on 3 pages: 90% fewer output tokens, 87% lower latency, 61% cost savings.

Background: From Docling to PyMuPDF + VLM

While building an AI system for architecture regulation review, we needed to split architecture notice/guideline PDFs into semantically meaningful chunks. These chunks serve as retrieval units in a RAG pipeline.

We initially used IBM’s Docling, which uses OCR models to analyze document structure before chunking. However, we ran into two problems:

- Heavyweight: The inclusion of OCR and layout analysis models (RT-DETR, etc.) resulted in large Docker image sizes and long processing times

- Hard to customize: The internal pipeline was essentially a black box, making it difficult to finely control the hierarchical structures unique to architecture documents (chapter > article > paragraph > item) or table handling

So we removed the OCR model dependency and switched to extracting text and font metadata directly with PyMuPDF. By replacing structural analysis with a multimodal LLM (VLM) Vision capability, we completely eliminated the OCR dependency while gaining full customization freedom.

PDF → PyMuPDF Text Extraction → Page Classification → LLM Structural Analysis → Chunk Creation → Embedding

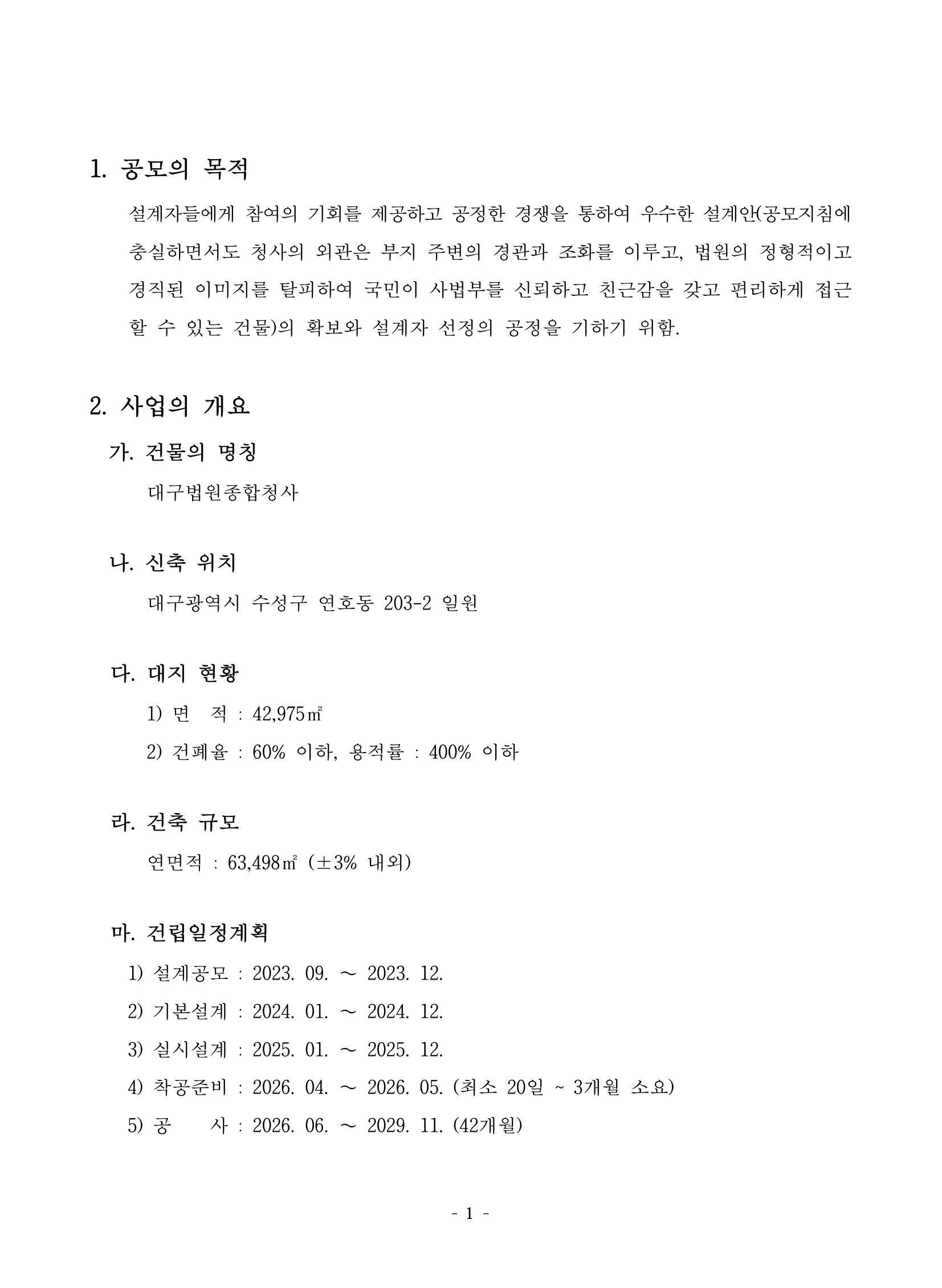

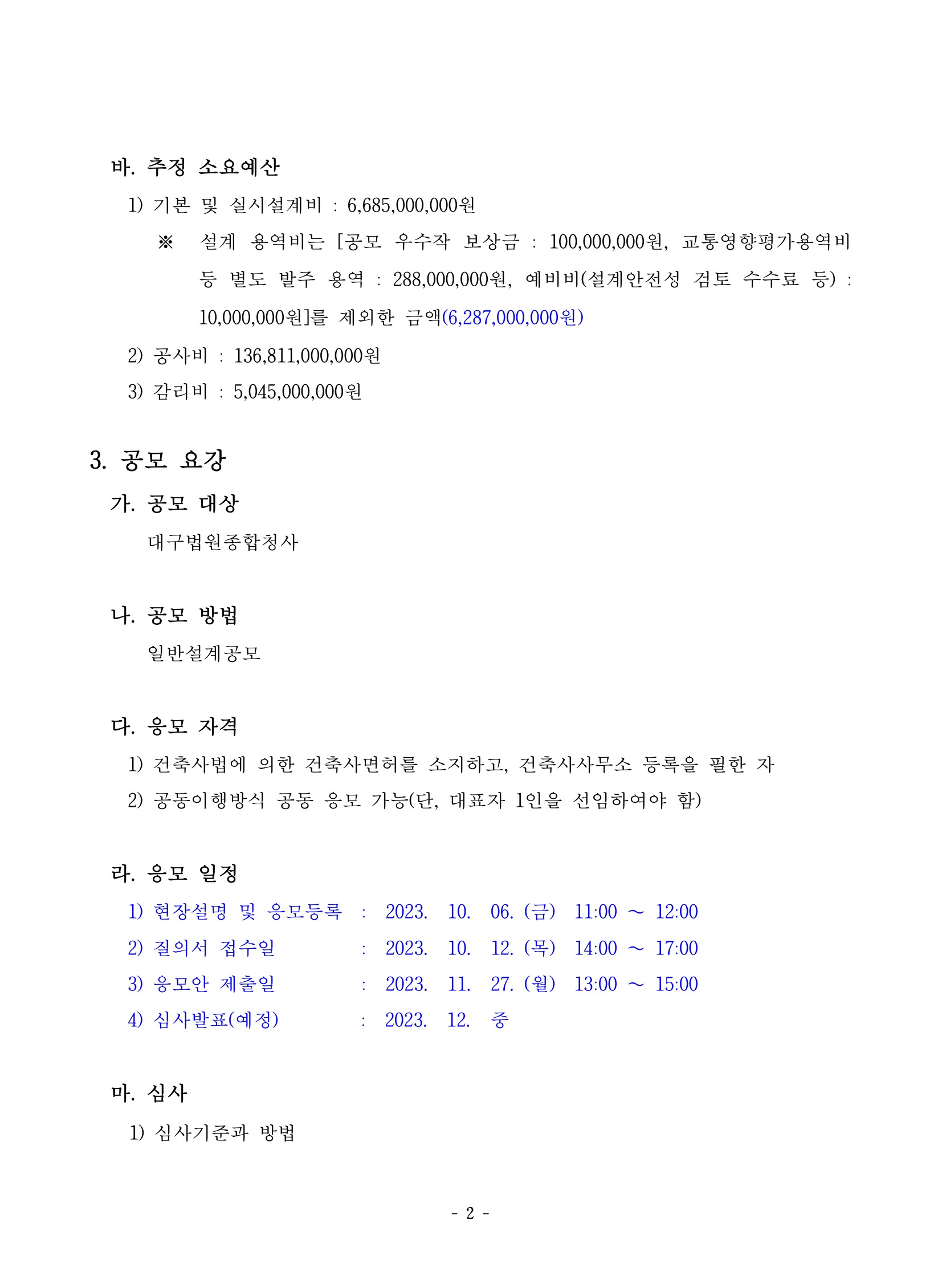

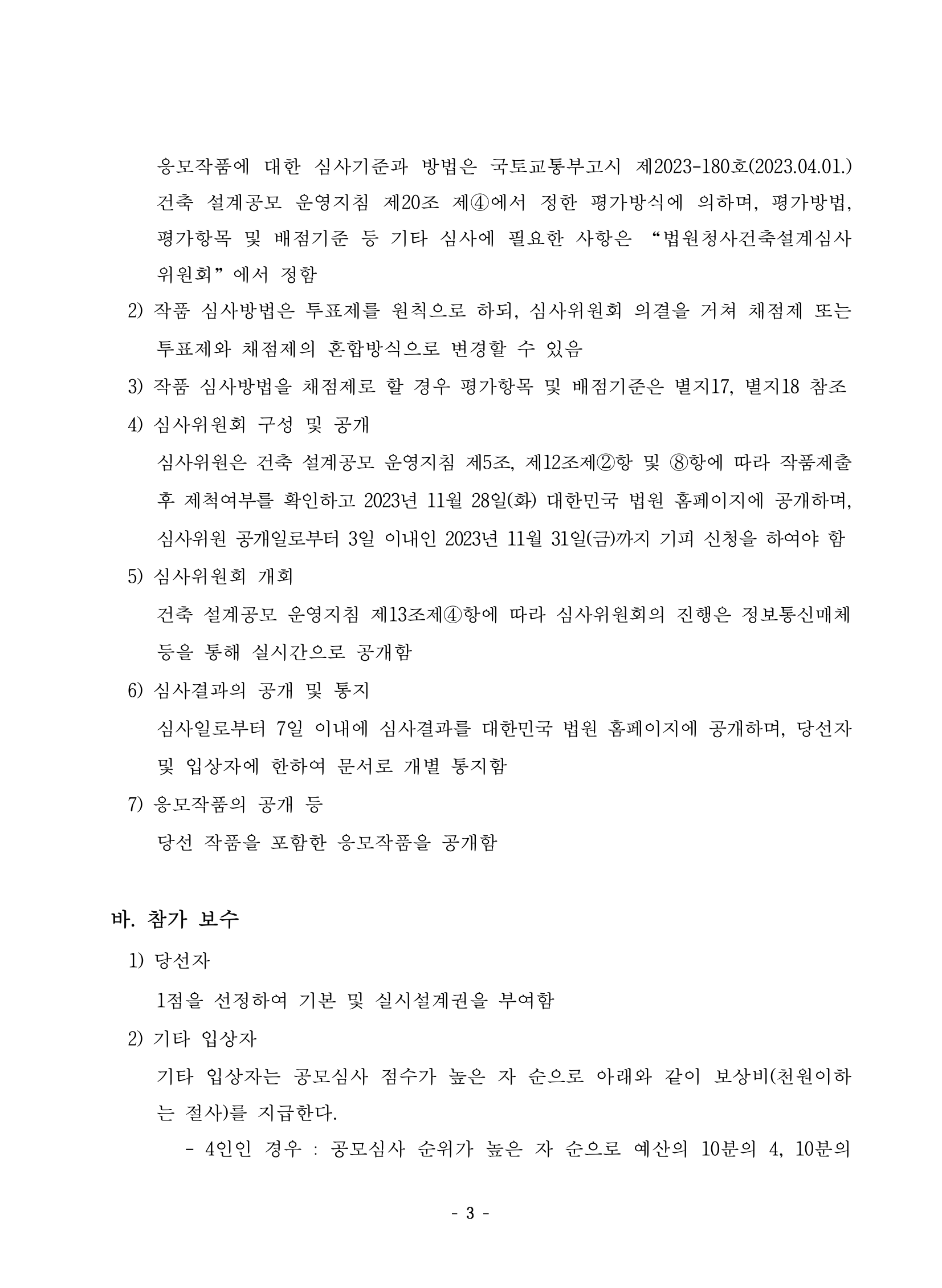

Below are three body pages from the actual PDF we processed — the Daegu Court Complex design competition guidelines.

| Page 3 | Page 4 | Page 5 |

|---|---|---|

|

|

|

The body text contains hierarchical section structures such as “1. Purpose of the Competition,” “2. Project Overview (a–e),” and “3. Competition Guidelines (a–e).” The goal is to have the LLM automatically split this into semantic units — and that’s where a new problem emerged.

The Problem: LLM Output Token Cost

With LLM APIs, output token pricing is 3–5x higher than input.

| Model | Input (/1M tokens) | Output (/1M tokens) | Multiplier |

|---|---|---|---|

| Claude Haiku 4.5 | $1.00 | $5.00 | 5x |

| Claude Sonnet 4.6 | $3.00 | $15.00 | 5x |

The simplest approach is to have the LLM output the full content of each split section as-is:

[Input] "1. Purpose of the Competition\nProviding designers with opportunities..."

[Output] {"heading": "1. Purpose of the Competition", "text": "Providing designers with opportunities..."}

↑ Nearly a verbatim repeat of the input

Since Output ≈ Input, it was obvious that for a 54-page document, output alone would generate tens of thousands of tokens. In a pricing structure where output costs 5x more, over 80% of total costs would come from output.

The Solution: Integer Index References

The core idea is simple:

Have the LLM output only the start/end indices of the text, and look up the original text directly on the server.

Seeing the actual data makes this immediately clear:

[LLM Input — 78 lines, 3,897 tokens]

[page 3]

0 <<h:15.0pt>> 1. 공모의 목적

1 <<p:12.0pt>> 설계자들에게 참여의 기회를 제공하고 공정한 경쟁을 통하여 우수한 설계안(공모지침에

2 <<p:12.0pt>> 충실하면서도 청사의 외관은 부지 주변의 경관과 조화를 이루고...

...

5 <<h:15.0pt>> 2. 사업의 개요

6 <<p:13.0pt>> 가. 건물의 명칭

7 <<p:12.0pt>> 대구법원종합청사

...

31 <<h:15.0pt>> 3. 공모 요강

...

(78 lines total across 3 pages)

[LLM Output — 190 tokens]

[

{"heading": "1. 공모의 목적", "start": 0, "end": 4},

{"heading": "2. 사업의 개요", "start": 5, "end": 30},

{"heading": "3. 공모 요강", "start": 31, "end": 43},

{"heading": "마. 심사", "start": 44, "end": 67},

{"heading": "바. 참가 보수", "start": 68, "end": 74}

]

A heading name + two integers. The server retrieves the original text directly via line_list[start:end+1], so the LLM never needs to output it again.

Measured Results

We processed pages 3–5 using Claude Haiku 4.5 (Bedrock Converse API) with both approaches. The input text was identical — only the prompts differed between “output full section content” and “output indices only.”

| Full Text Output | Index Reference | Reduction | |

|---|---|---|---|

| Output tokens | 1,865 | 190 | 90% |

| Latency | 16.8s | 2.2s | 87% |

| Cost | $0.0123 | $0.0048 | 61% |

Reducing output tokens doesn’t just cut costs. LLM response time scales with the number of tokens generated, so when output drops from 1,865 to 190, latency also drops from 16.8s to 2.2s — an 87% reduction. A 14-second difference across 3 pages translates to minutes of difference across a 54-page document.

Cost savings matter, but the several-fold reduction in document processing wait time makes an even bigger difference for user experience.

Implementation Details

Generating Indexed Annotated Text

def page_to_indexed_text(page_data: dict, line_counter: int = 0):

"""Structured text → integer-indexed annotations (for LLM input)"""

output_lines = [f"[page {page_data['page_num']}]"]

line_list = []

for block in page_data["blocks"]:

for line in block["lines"]:

first_span = line["spans"][0]

size, bold = first_span["size"], first_span["bold"]

is_heading = bold or size >= 14

style = "h" if is_heading else "p"

suffix = ",bold" if bold else ""

tag = f"<<{style}:{size}pt{suffix}>>"

text = " ".join(s["text"] for s in line["spans"])

line_list.append({

"text": text, "page": page_data["page_num"],

"is_heading": is_heading, "bbox": _union_bbox(line), # omitted

})

output_lines.append(f"{line_counter} {tag} {text}")

line_counter += 1

return "\n".join(output_lines), line_list, line_counter

We store the original text, page number, and bbox in line_list, while appending index numbers and font annotations to the text sent to the LLM. When the LLM returns {"start": 5, "end": 30}, the server simply retrieves line_list[5:31].

Prompt

TEXT_CHUNKING_PROMPT = """<role>

건축 관련 고시/지침 PDF를 분석하여 논리적 섹션으로 분할하는 전문가입니다.

</role>

<input>

각 라인 앞에 0, 1, 2 같은 인덱스 번호가 부여되어 있습니다.

섹션의 시작과 끝 인덱스만 지정하면 됩니다.

</input>

<output_format>

JSON 배열만 출력하세요.

[{{"heading": "섹션 제목", "start": 시작_인덱스, "end": 끝_인덱스}}]

</output_format>

<text>

{text}

</text>"""

Applying the Same Pattern Beyond Text Pages

This index reference pattern applies equally to table pages and mixed (text + table) pages — not just text pages. The format varies, but the principle stays the same:

| Page Type | Index Given to LLM | LLM Output | Server Reconstruction |

|---|---|---|---|

| Text | Line index 0, 1, 2... |

start, end |

line_list[start:end+1] |

| Table | Table/row index [Table 0], 0:, 1:... |

index, header_row |

tables_data[index][row] |

| Mixed | Block ID [B0], [B1]... |

block_refs: [0, 1] |

page_data["blocks"][i] |

For table pages, we extract cell data with PyMuPDF’s find_tables() and assign row indices. For mixed pages, we assign [B0], [B1]... IDs to each text block. Regardless of page type, the LLM references positions using integers, and the server looks up the originals.

Bonus: Precise PDF Highlighting

Because we store not just text but also bounding boxes (coordinates) in line_list, we can immediately calculate the exact PDF region for any section using the index range from the LLM output. With the full text output approach, we would have had to re-match the LLM output against the original PDF, but with the index approach, we simply look up line_list[i].bbox.

Below is the result of overlaying the bounding boxes of the 5 sections from the PoC onto the actual PDF. Each color corresponds to a section, and sections that span across pages (e.g., “2. Project Overview” spanning pages 3–4) are tracked accurately.

Summary

| Full Text Output | Index Reference | |

|---|---|---|

| Output tokens | 1,865 | 190 (90% reduction) |

| Latency | 16.8s | 2.2s (87% reduction) |

| Cost | $0.0123 | $0.0048 (61% reduction) |

| Hallucination risk | Text may be altered | Uses original text as-is |

| Implementation complexity | Simple | Requires indexing preprocessing |

When using an LLM to classify or split documents, asking it to “just tell me where” instead of “rewrite the result” can dramatically reduce output tokens. Because both cost and response speed decrease together, the impact is amplified under the current pricing structure where output costs several times more than input.

This technique is implemented and running in production as part of the document preprocessing pipeline for an architecture regulation review AI system, built with PyMuPDF + Claude Haiku.